We have been developing the core logic of advanced Web Application Firewalls (WAFs) since 2013. For example, from 2013 to 2018 we developed the first three versions of the core of Positive Technologies Application Firewall (PT AF), an enterprise-grade next-generation WAF that Gartner placed among the visionaries in its 2015 Magic Quadrant.

During our years of work in the WAF area, we learned an important lesson: there is an inevitable trade-off. A WAF is either accurate in its security checks or fast, but not both. For example, modern WAFs rely on high-accuracy machine learning techniques to maximize the detection rate and minimize false positives. However, accurate machine learning algorithms require significant computation and memory resources (consider the complexity of even the most basic algorithm, k-means). In the world of server software even O(n) algorithms are considered too slow, because web servers must handle hundreds of thousands of client requests per second. For instance, H2O uses a less accurate but faster O(1) algorithm for HTTP/2 stream prioritization instead of the more accurate O(log(n)) algorithm from the nghttp2 library.

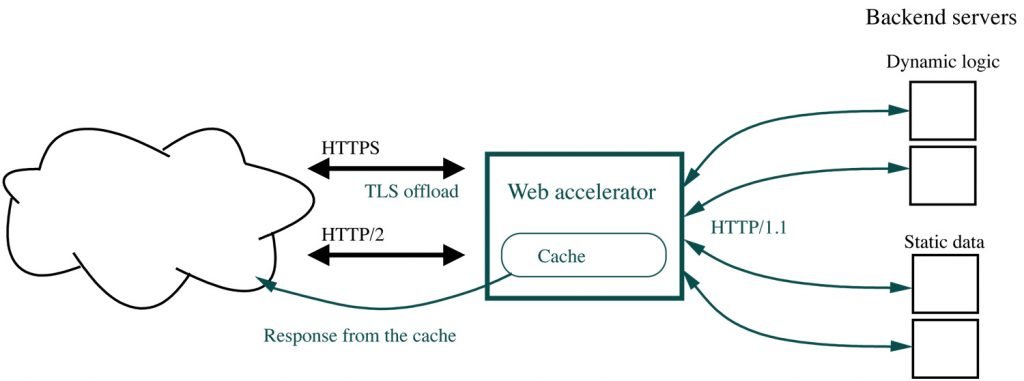

We know a similar problem with web servers running dynamic logic: they are too slow, so there are web accelerators which offload many simple jobs from them. A WAF accelerator works in a similar way: it offloads simple security checks from more advanced WAFs, load balances traffic, and protects a backend WAF cluster against DDoS attacks.

WAF performance issues

First, let's look at several of the most typical WAF performance issues, which might be applicable to open source WAFs. Machine learning isn't widely used by open source WAFs, and due to NDAs we can't discuss the technical details of the machine learning performance optimizations that we have done for commercial WAFs.

The WAF platform

A WAF intercepts all HTTP requests and responses and executes sophisticated security checks against the HTTP headers and body, so it is essentially an HTTP proxy. Nginx is frequently used as a WAF platform. At least CloudFlare, Signal Sciences, and Wallarm have announced that their WAFs work as Nginx modules. ModSecurity works with Nginx, but was originally developed for Apache HTTPD. NAXSI, another open source WAF, is developed solely for Nginx.

Since WAFs are just modules for HTTP proxies (Nginx in most cases), they inherit all the performance limitations of the platform.

Process model and event loop

WAFs need to execute relatively heavy logic for each HTTP request, and CloudFlare has experienced issues with the Nginx event loop: some requests may take so long to inspect that they fully occupy all Nginx worker processes. In this case all other, unrelated requests get stuck in the queue waiting for processing. This is where threading proxies like Varnish might be beneficial.
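The queueing effect described above can be illustrated with a toy model. This is only a sketch under simplifying assumptions: `completion_times` is a hypothetical helper, each worker runs one request to completion at a time, and the service times are invented numbers, not measurements of Nginx or any WAF.

```python
def completion_times(service_times_ms, workers):
    """Return the time at which each queued request finishes.

    Each worker runs requests to completion, one at a time, the way a
    single-threaded event loop does while a handler keeps the CPU busy.
    """
    free_at = [0.0] * workers  # moment when each worker becomes idle
    done = []
    for cost in service_times_ms:
        # Hand the request to the worker that becomes idle first.
        w = min(range(workers), key=lambda i: free_at[i])
        free_at[w] += cost
        done.append(free_at[w])
    return done


# Two slow WAF inspections (500 ms each) followed by three cheap requests (1 ms).
requests = [500.0, 500.0, 1.0, 1.0, 1.0]

if __name__ == "__main__":
    print(completion_times(requests, workers=2))
    print(completion_times(requests, workers=3))
```

With two workers both are occupied by the slow inspections, so the cheap requests wait about half a second. With one more execution context the cheap requests finish within a few milliseconds, which is the intuition behind preferring a threaded design here.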

HTTP parser

Nginx has a reasonable HTTP parser implementation. But if you ever think about launching an HTTP flood attack, you should try a more or less realistic URI. Nginx uses a switch-driven HTTP parser. This is a code snippet for URI processing (the function is actually much longer):

case sw_check_uri:
    if (usual[ch >> 5] & (1U << (ch & 0x1f)))
        break;

    switch (ch) {
    case '/':
        r->uri_ext = NULL;
        state = sw_after_slash_in_uri;
        break;
    case '.':
        r->uri_ext = p + 1;
        break;
    case ' ':
        r->uri_end = p;
        state = sw_check_uri_http_09;
        break;
    case CR:
        r->uri_end = p;
        r->http_minor = 9;
        state = sw_almost_done;
        break;
    case LF:
        r->uri_end = p;
        r->http_minor = 9;
        goto done;
    case '%':
        r->quoted_uri = 1;
        // ...
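The first test in the snippet, `usual[ch >> 5] & (1U << (ch & 0x1f))`, is a lookup in a 256-bit bitmap stored as eight 32-bit words. Here is a minimal sketch of the same indexing. The helper names are hypothetical, and the character set used below (ASCII letters and digits) is an assumption for illustration, not the actual contents of the Nginx table.

```python
def build_bitmap(allowed):
    """Pack a collection of byte values into eight 32-bit words (256 bits)."""
    words = [0] * 8
    for ch in allowed:
        # Word index is ch / 32, bit index inside the word is ch % 32.
        words[ch >> 5] |= 1 << (ch & 0x1F)
    return words


def is_usual(words, ch):
    """Same indexing as `usual[ch >> 5] & (1U << (ch & 0x1f))` in the snippet."""
    return bool(words[ch >> 5] & (1 << (ch & 0x1F)))


# Hypothetical "usual" set: ASCII letters and digits only.
USUAL = build_bitmap(
    list(range(ord("a"), ord("z") + 1))
    + list(range(ord("A"), ord("Z") + 1))
    + list(range(ord("0"), ord("9") + 1))
)

if __name__ == "__main__":
    for c in "h%/":
        print(repr(c), is_usual(USUAL, ord(c)))
```

Characters found in the bitmap take the fast `break` path. Everything else falls through to the `switch`, which is why URIs full of `/`, `.`, `%` and similar characters cost the parser more per byte.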

If we replay a real hotel search query from Booking.com with the wrk traffic generator

./wrk -t 4 -c 128 -d 60s --header 'Connection: keep-alive' --header 'Upgrade-Insecure-Requests: 1' \
--header 'User-Agent: Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) '\
'Chrome/52.0.2743.116 Safari/537.36' --header 'Accept: text/html,application/xhtml+xml,'\
'application/xml;q=0.9,image/webp,*/*;q=0.8' --header 'Accept-Encoding: gzip, deflate, sdch' \
--header 'Accept-Language: en-US,en;q=0.8,ru;q=0.6' \
--header 'Cookie: a=sdfasd; sdf=3242u389erfhhs; djcnjhe=sdfsdafsdjfb324te1267dd' \
'https://192.168.100.4:9090/searchresults.en-us.html?aid=304142&label=gen173nr-1FCAEoggI46Ad'\
'IM1gEaIkCiAEBmAExuAEZyAEP2AEB6AEB-AECiAIBqAIDuAKAg4DkBcACAQ&sid=686a0975e8124342396dbc1b331fab24'\
'&tmpl=searchresults&ac_click_type=b&ac_position=0&checkin_month=3&checkin_monthday=7&checkin_year='\
'2019&checkout_month=3&checkout_monthday=10&checkout_year=2019&class_interval=1&dest_id=20015107&'\
'dest_type=city&dtdisc=0&from_sf=1&group_adults=1&group_children=0&inac=0&index_postcard=0&label_'\
'click=undef&no_rooms=1&postcard=0&raw_dest_type=city&room1=A&sb_price_type=total&sb_travel_purpose'\
'=business&search_selected=1&shw_aparth=1&slp_r_match=0&src=index&srpvid=e0267a2be8ef0020&ss=Pasadena%2C'\
'%20California%2C%20USA&ss_all=0&ss_raw=pasadena&ssb=empty&sshis=0&nflt=hotelfacility%3D107%3Bmealplan'\
'%3D1%3Bpri%3D4%3Bpri%3D3%3Bclass%3D4%3Bclass%3D5%3Bpopular_activities%3D55%3Bhr_24%3D8%3Btdb'\
'%3D3%3Breview_score%3D70%3Broomfacility%3D75%3B&rsf='

then the HTTP parser functions show up at the top of a perf top profiling session:

8.62% nginx [.] ngx_http_parse_request_line
2.52% nginx [.] ngx_http_parse_header_line
1.42% nginx [.] ngx_palloc
0.90% [kernel] [k] copy_user_enhanced_fast_string
0.85% nginx [.] ngx_strstrn
0.78% libc-2.24.so [.] _int_malloc
0.69% nginx [.] ngx_hash_find
0.66% [kernel] [k] tcp_recvmsg

The functions are the performance bottleneck in this case, and performance bottlenecks are good targets for a DDoS attack.

XML parser

The HTTP parser is only the beginning of the story. A good WAF relies on a bunch of various parsers. The most obvious one is an HTML parser: XSS, CSRF, XXE, and many other attack payloads reside in the HTML/XML bodies of HTTP requests and responses, and a good WAF must build a DOM tree to analyze the documents. LibXML2, one of the fastest libraries, spends 2 ms to parse a 5 KB document. This means that on a single core the library is unable to parse more than about 190 responses per second for this article's web page, which is a bit more than 13 KB in size (quite modest for the modern Web). Given that a WAF must not only parse the DOM but also analyze it, we come to a rough estimate that a WAF doing only HTML/XML analysis is able to process no more than 1,500 requests per second on reasonable 8-core hardware. Of course, there is a lot of other complex logic which reduces the number even further.
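The estimate can be reproduced with a few lines of arithmetic. The sketch below assumes that parse time grows linearly with document size and that throughput scales linearly with the number of cores. Both are simplifications, and `max_docs_per_second` is a hypothetical helper, not part of any library.

```python
def max_docs_per_second(doc_kb, ms_per_5kb=2.0, cores=1):
    """Upper bound on parsed documents per second.

    Assumes parse time is proportional to document size (2 ms per 5 KB,
    the figure quoted in the text) and perfect scaling across cores.
    """
    ms_per_doc = ms_per_5kb * doc_kb / 5.0
    return cores * 1000.0 / ms_per_doc


if __name__ == "__main__":
    print(round(max_docs_per_second(13)))           # one core, 13 KB page
    print(round(max_docs_per_second(13, cores=8)))  # eight cores
```

A 13 KB page takes about 5.2 ms to parse, which gives roughly 190 documents per second per core and about 1,500 on eight cores, before any analysis of the resulting DOM tree.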

Regular expressions

ModSecurity, the most popular open source WAF, is built around regular expressions. Enterprise WAFs also use regular expressions extensively for virtual patching, a quick fix of a threat on the WAF side instead of fixing the vulnerable application. There are many web attacks, and attackers do their best to evade detection, so WAFs use a lot of rules with very complex regular expressions. As a result, such a WAF reaches only 2-5% of the performance of the original web accelerator.

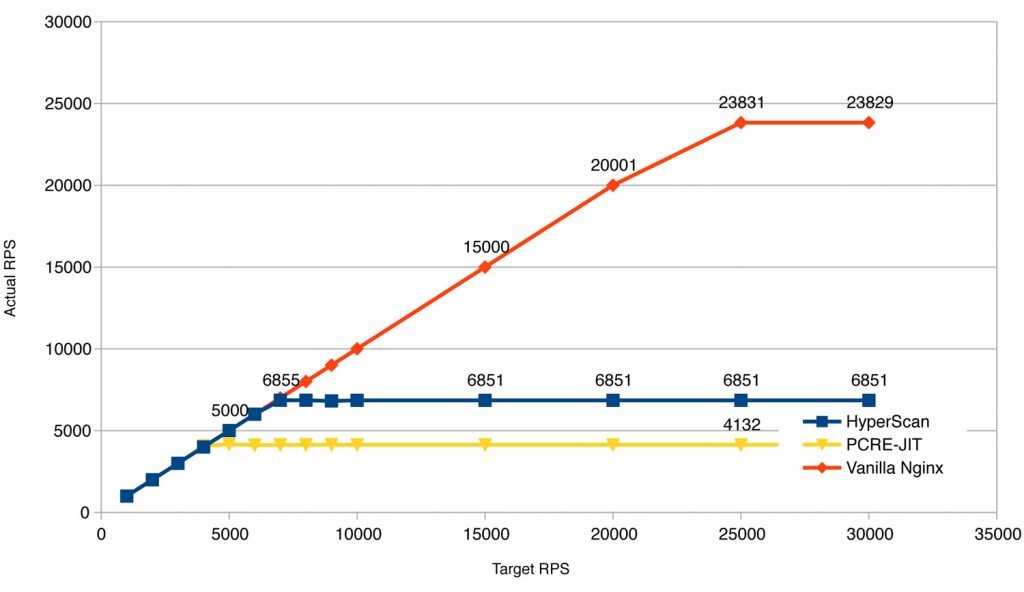

PCRE JIT significantly improves the performance of the PCRE regular expression library. Intel HyperScan goes even further by applying various optimization techniques to regular expressions, as well as compiling a number of rules into a single finite state machine (so-called multi-pattern matching).
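The idea of multi-pattern matching can be sketched by merging several rules into one compiled pattern and scanning the input once. Note the assumptions: the rule names and patterns below are invented for illustration, and Python's `re` module is a backtracking engine, so this shows only the shape of the approach, not the automaton that HyperScan builds or its performance.

```python
import re

# Hypothetical rule set: names and patterns are made up for this example.
RULES = {
    "sqli_union": r"union\s+select",
    "xss_script": r"<script\b",
    "path_traversal": r"\.\./",
}

# One combined pattern: each rule becomes a named alternative.
COMBINED = re.compile(
    "|".join(f"(?P<{name}>{pattern})" for name, pattern in RULES.items()),
    re.IGNORECASE,
)


def matched_rules(payload):
    """Scan the payload once and return the names of the rules that fired."""
    return sorted({m.lastgroup for m in COMBINED.finditer(payload)})


if __name__ == "__main__":
    print(matched_rules("/a/../b?q=1 UNION  SELECT x"))
    print(matched_rules("/index.html"))
```

One limitation of this sketch is that `finditer` returns non-overlapping matches only, so two rules matching the same bytes would be reported as one. A real multi-pattern engine reports every rule that matches.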

Consider these results from our study of regular expression performance. The figure shows the performance degradation of a WAF built on top of Nginx when it runs regular expression rules using the fastest regular expression libraries, PCRE-JIT or Intel HyperScan. While HyperScan is about 60% faster than PCRE-JIT, for the given rule set we were able to reach only 29% of the performance of the original Nginx build.

Regular expressions are a classic target for asymmetric DDoS attacks. A single inaccurate regular expression rule led to a CloudFlare outage.

Web cache

Some web accelerators, e.g. Nginx, Apache HTTPD, and Squid, use an old-fashioned cache built on plain files. Others use virtual memory; Varnish seems to be unique here. Apache Traffic Server uses an advanced database-like cache, which typically provides better performance. All these approaches involve disk I/O. HDDs and even SSDs provide more space at a lower price than RAM, and the space is required to deliver heavy content to clients. However, disk I/O is tightly coupled with Linux virtual memory management (VMM), which may experience performance issues under intensive I/O due to page replacement. You might remember how your Linux workstation or laptop becomes unresponsive for a short period of time during massive I/O. Large production systems may experience even worse problems.

The next question is whether a WAF should be installed in front of a web cache or behind it. Web architecture best practices suggest placing a slower application behind a faster one, so the WAF should reside behind the cache. However, a WAF needs to inspect as much traffic as possible to make the right correlations. There is also a family of web cache specific attacks, e.g. web cache poisoning, web cache deception, and cache poisoned denial of service (CPDoS) attacks, so the web cache itself needs protection. (By the way, read about CPDoS attack prevention in our previous post.)

Since WAFs typically work as web accelerator modules, their logic processes traffic before the cache. This way the web cache performance is limited by a WAF.

Application layer DDoS attacks

Most, if not all, WAFs offer mitigation of application layer DDoS attacks. Suppose you have powerful hardware and your WAF is able to process 20,000 requests per second. Now you face a botnet of 1,000 hosts, each of which sends you, say, 200 requests simultaneously. The first request from a bot always passes the firewall (even primitive botnets like Mirai use User-Agent and other HTTP headers from normal browsers and are able to solve a cookie challenge). If all the requests target the same URI, say /, then the first 1,000 requests are quickly served from the cache, and the second or third request from each bot leads the WAF to ban the bot. The numbers are good in this scenario.

But what if all 1,000 bots request different heavy content from the cache? You'll likely get stuck on disk I/O, especially if your cache is much larger than RAM (the usual reason why web caches need to store content on disk). Or what if the botnet has 402,000 different IP addresses? The WAF will be fighting the malicious requests for hours or, most likely, your Linux machine will just die reclaiming system pages.

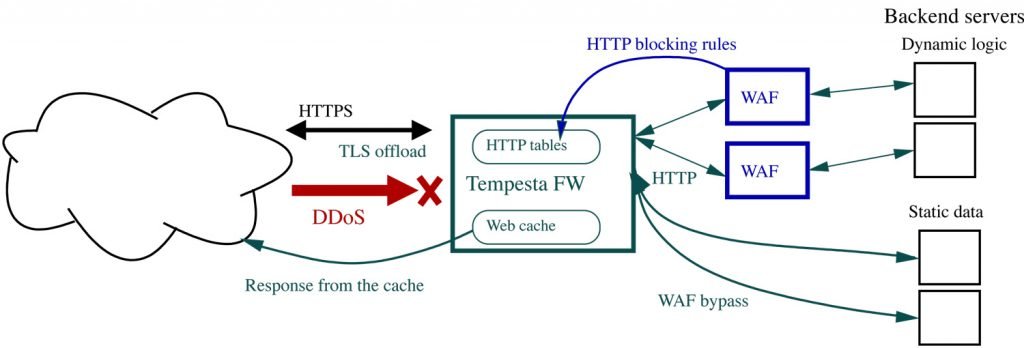

WAF acceleration

The basic idea is the same as for web acceleration: we place a featureful, but slow, WAF behind a light-weight accelerator, which offloads certain types of jobs from the WAF and protects it against normal traffic spikes as well as DDoS attacks.

We constantly develop new web security features for Tempesta FW, but it is designed as a WAF accelerator. Its main purpose is to handle all ingress traffic, including DDoS, and distribute pre-filtered traffic among WAF instances (if you need to build a seriously powerful solution) and resources which might not require WAF protection (e.g. static data servers). Tempesta FW performs only fast (though not so simple!) checks, which can be done at wire speed.

Zero-copy network I/O

Tempesta FW works as part of the Linux TCP/IP stack, so there is no data copying and there are no context switches between user and kernel space. It reaches the same speed as kernel bypass web servers, e.g. Seastar built on top of DPDK. But, being part of the Linux network infrastructure, it allows you to manage all your traffic with common Linux tools like tc, eBPF and XDP, tcpdump, iptables and nftables, IPVS, and any others you can imagine.

Thanks to the fast network I/O, Tempesta FW can handle traffic even from a quite serious botnet launching an application layer DDoS attack against your web resource. Full compatibility with the Linux network I/O infrastructure allows you to employ XDP filtering against volumetric DDoS on the same box with Tempesta FW.

Fast and secure HTTP parser

We described the main technologies of our HTTP parser in our old blog posts:

The parser has evolved significantly since these articles, and the technical updates were covered in the Fast HTTP string processing algorithms talk at SCALE 17x, March 2019 (slides). The parser not only outperforms the CloudFlare, H2O (PicoHTTPParser), and Nginx parsers (see the benchmark details in the posts and slides), but also provides more accurate checks and flexible protection against SQL injection and XSS.

For example, Google was attacked in 2016 with Relative Path Overwrite (RPO) using the following line in the URI:

/tools/toolbar/buttons/gallery?q=%0a{}*{background:red}/..//apis/howto_guide.html

(note the anomalous characters {, }, and : in the URI). Tempesta FW can block such attacks by restricting the set of characters allowed in a URI. To restrict the URI character set to only [a-zA-Z0-9/%*-], use the following configuration option:

http_uri_brange 0x25 0x2A 0x2D 0x2F 0x30-0x39 0x41-0x5A 0x61-0x7A;
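To see what such a restriction means for the RPO request, here is a rough model of the check. It is a sketch under stated assumptions: `parse_brange` and `rejected_bytes` are hypothetical helpers that mimic the byte ranges listed above, they are not Tempesta FW code, and the sketch applies the set to the whole request URI including the query string.

```python
def parse_brange(spec):
    """Expand a list like '0x25 0x30-0x39' into a set of allowed byte values."""
    allowed = set()
    for item in spec.split():
        lo, _, hi = item.partition("-")
        first = int(lo, 16)
        last = int(hi, 16) if hi else first
        allowed.update(range(first, last + 1))
    return allowed


def rejected_bytes(uri, allowed):
    """Return the distinct characters of the URI that fall outside the set."""
    return sorted({chr(b) for b in uri.encode("ascii") if b not in allowed})


ALLOWED = parse_brange("0x25 0x2A 0x2D 0x2F 0x30-0x39 0x41-0x5A 0x61-0x7A")
RPO_URI = ("/tools/toolbar/buttons/gallery"
           "?q=%0a{}*{background:red}/..//apis/howto_guide.html")

if __name__ == "__main__":
    print(rejected_bytes(RPO_URI, ALLOWED))
```

The braces and the colon are rejected, so the request would not pass. In this model the same strict set also rejects `?`, `=`, `.` and `_`, so a real deployment would probably need a somewhat wider range for URIs that carry query strings or file extensions.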

You can find more configuration and attack examples in our wiki and the slides.

Recently Wallarm posted the case “when your WAF needs its own WAF”. It is a proven practice to set up double security shields from different vendors, so that a vulnerability in one of them is covered by the other. Everybody has bugs. We try all the attacks we can find against Tempesta FW, and this case wasn't an exception. The request which crashed ModSecurity was, of course, blocked by Tempesta FW:

$ echo -e 'GET / HTTP/1.1\nCookie: =ffoo\nHost: test\n\n' | nc test 80
HTTP/1.1 400 Bad Request
date: Wed, 25 Mar 2020 19:27:04 GMT
content-length: 0
server: Tempesta FW/0.7.0
connection: close

$

In-kernel TLS handshakes

Tempesta FW implements its own lightweight TLS handshakes with no memory allocations at run time. The standard Linux crypto API is used to handle encryption and decryption of TLS records. Thanks to the optimized memory management, the absence of context switches, and tight integration with the Linux TCP/IP stack, Tempesta FW is well armored against TLS handshake DDoS. You can find more details about Tempesta TLS in our Netdev publication. By the way, we are going to propose in-kernel TLS handshakes for the Linux upstream at the upcoming Netdev conference.

Multi-layer firewall

Tempesta FW’s HTTPtables define rule chains on HTTP layer to load balance and/or block HTTP requests by various HTTP fields. The key feature of the HTTP firewall is that it can read and write netfilter MARK for network packets carrying an HTTP message. This allows you to mark a packet depending on low level network rules (e.g. for a particular TCP port and/or IP address) and continue to process the rules on HTTP layer.

For example, suppose you want to filter out all HTTP requests which come from IP 192.168.100.1 and contain a Referer header with the value badhost.com/*. First, write a normal nftables rule to mark all packets from the IP address:

$ nft add rule inet filter input ip saddr 192.168.100.1 mark set 1

Next, define a default http_chain which checks the mark: if it equals 1, the request is sent for processing to the multi_layer_rules chain; otherwise it is passed to an upstream server. The multi_layer_rules chain checks whether the Referer header matches the pattern and blocks the request if so, or passes it otherwise.

srv_group backend { server 127.0.0.1:8080; }
vhost protected_host { proxy_pass backend; }

http_chain multi_layer_rules {
    hdr "Referer" == "badhost.com/*" -> block;
    -> protected_host; # all checks are passed
}

http_chain {
    mark == 1 -> multi_layer_rules;
    -> protected_host; # pass all by default
}
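The decision flow of the two chains above can be modeled in a few lines. This is only an illustrative sketch: the function names mirror the chain names, the shell-style wildcard matching from `fnmatch` is an assumption about how the `badhost.com/*` pattern behaves, and none of it is Tempesta FW code.

```python
from fnmatch import fnmatchcase


def multi_layer_rules(headers):
    """Model of the named chain: block on a bad Referer, pass otherwise."""
    if fnmatchcase(headers.get("Referer", ""), "badhost.com/*"):
        return "block"
    return "protected_host"  # all checks are passed


def default_chain(mark, headers):
    """Model of the default chain: only marked packets get the HTTP checks."""
    if mark == 1:
        return multi_layer_rules(headers)
    return "protected_host"  # pass all by default


if __name__ == "__main__":
    bad = {"Referer": "badhost.com/page"}
    print(default_chain(1, bad))  # marked by nftables and bad Referer
    print(default_chain(0, bad))  # same Referer, but from another IP
```

The point of the split is that the cheap network-level condition (the source IP) is evaluated by netfilter, and the HTTP-level condition is checked only for packets which already carry the mark.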

It's cool to be able to write such rules, but it's not so handy to do this by hand under a DDoS attack. Fortunately, WAFs can offload their filtering policies to Tempesta FW automatically. For example, the exec action of ModSecurity can be used to add a blocking rule to HTTPtables.

Layer-1 web cache

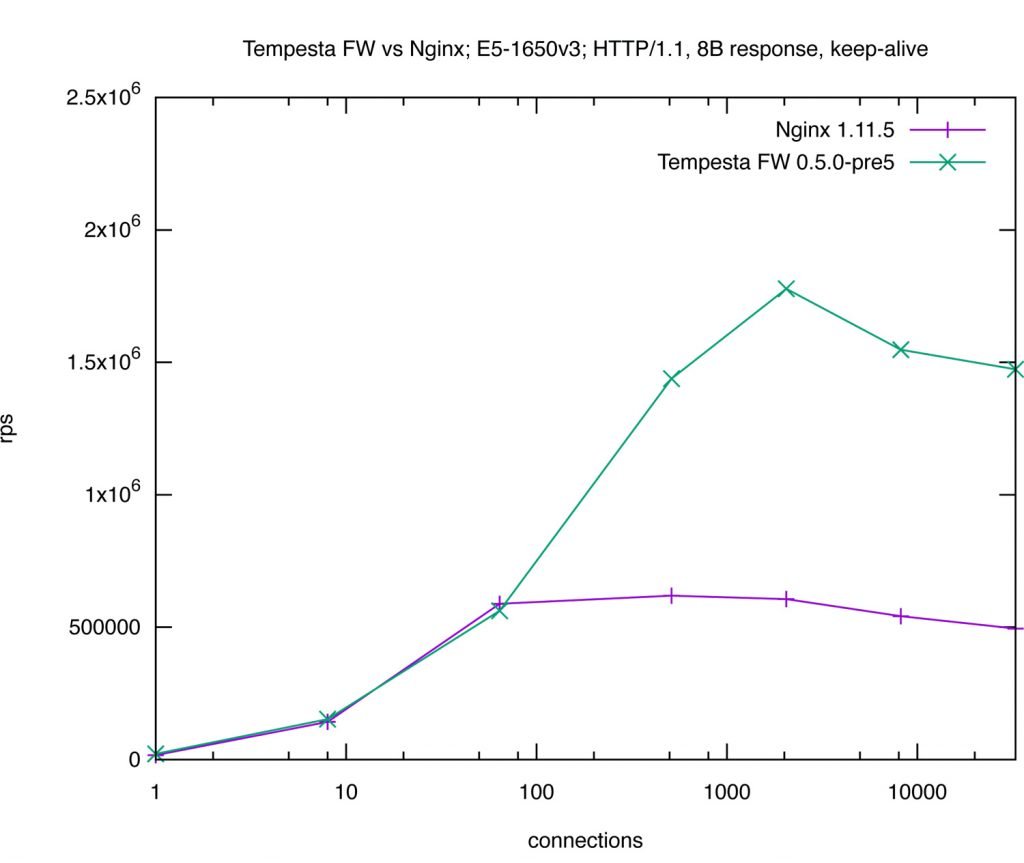

Modern CPUs have 3 or 4 levels of cache. The level 1 cache is the fastest and the smallest one. The Tempesta FW web cache was designed as a level 1 cache: it is very fast, but it must fit in RAM. There is no disk I/O during Tempesta FW operation. The web cache serves the hottest content as fast as possible. We compared the performance of our web cache with Nginx. Tempesta FW reached 1,802,842 requests per second, which is 3 times faster than a properly tuned Nginx with access.log switched off.

The largest application layer DDoS attack registered by Imperva in 2019 was 292,000 requests per second. This means that you can serve the largest application layer DDoS attack right from the cache on an entry-level 4-core Xeon server. (This works for HTTP flood attacks targeting the same resource, e.g. /, which is frankly the most frequent case. For more advanced attacks Tempesta FW uses various challenges and rate limits.)
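A quick calculation shows how much headroom these numbers leave. The sketch only divides the two figures quoted above; `headroom` is a hypothetical helper, and the Nginx figure is derived from the "3 times" statement, so treat it as approximate.

```python
def headroom(cache_rps, attack_rps):
    """How many times the cache throughput exceeds the attack rate."""
    return cache_rps / attack_rps


TEMPESTA_RPS = 1_802_842  # cache benchmark result quoted in the text
ATTACK_RPS = 292_000      # largest attack reported by Imperva for 2019

if __name__ == "__main__":
    print(f"headroom: {headroom(TEMPESTA_RPS, ATTACK_RPS):.1f}x")
    print(f"implied Nginx throughput: about {TEMPESTA_RPS / 3:,.0f} rps")
```

The cache throughput is roughly six times the attack rate, which is why a flood aimed at a single cached resource can be absorbed without involving the WAF behind it.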

Placing a web cache in front of a WAF requires Tempesta FW to take care of all web cache related attacks, and we constantly work on enhancing the web cache security.

Conclusion

Installed in front of a powerful WAF, Tempesta FW can not only significantly improve the overall performance and response latency of your web cluster, but also increase the DDoS resistance of the cluster and/or reduce the total cost of ownership by using fewer hardware resources.

As always, we welcome you to try and test Tempesta FW alpha.
